Hundred dollars a kg

To sum up what has gone before: dollar-a-gallon gasoline depends on penny-a-kWh electricity.

To solve the energy crisis using space-based solar power (SBSP), replacement power needs to come on line at a rate of 400-500 GW per year. That replaces about 6 million barrels of oil a day each year, every year for the next 50 years. To make liquid fuels at a dollar a gallon out of electricity, the electricity must cost around a penny a kWh. To make electricity at a penny a kWh, the lift cost to GEO must be $100/kg or less.
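As a rough consistency check on the oil figure, the sketch below converts continuous power into barrels of oil per day by energy content alone. The 6.1 GJ per barrel constant is an assumption added here (it is not in the text), and the comparison ignores conversion losses, so it only shows that 400-500 GW and about 6 million barrels a day are of the same order.

```python
# Rough check: continuous power (GW) vs. barrels of oil per day.
# ASSUMPTION (not from the text): one barrel of crude oil ~ 6.1 GJ of
# thermal energy; conversion losses are ignored.
GJ_PER_BARREL = 6.1
SECONDS_PER_DAY = 86_400


def barrels_per_day(gigawatts: float) -> float:
    """Barrels/day whose energy content equals `gigawatts` of continuous power."""
    joules_per_day = gigawatts * 1e9 * SECONDS_PER_DAY
    return joules_per_day / (GJ_PER_BARREL * 1e9)


for gw in (400, 500):
    print(f"{gw} GW ~ {barrels_per_day(gw) / 1e6:.1f} million barrels/day")
```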

How much lifted to GEO

Four hundred GW of power sats at 2 kg/kW requires 800,000 tons per year lifted to GEO (perhaps twice that if power sat mass can't be reduced below 4 kg/kW). At least there is economy of scale.
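The tonnage follows from multiplying the installation rate by the satellites' specific mass. A minimal sketch of that arithmetic, which also divides by the 8,760 hours in a year to get the hourly lift rate:

```python
# Mass that must reach GEO each year for a given specific mass (kg/kW).
GW_PER_YEAR = 400          # installation rate from the text
HOURS_PER_YEAR = 365 * 24  # 8,760 hours


def tonnes_per_year(kg_per_kw: float, gw_per_year: float = GW_PER_YEAR) -> float:
    """Tonnes per year lifted to GEO for the given specific mass."""
    kw = gw_per_year * 1e6          # 1 GW = 1,000,000 kW
    return kw * kg_per_kw / 1000    # kg -> tonnes


for kg_per_kw in (2, 4):
    t_yr = tonnes_per_year(kg_per_kw)
    print(f"{kg_per_kw} kg/kW: {t_yr:,.0f} t/yr, {t_yr / HOURS_PER_YEAR:.0f} t/h")
```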

Do we have to lift that much mass? In principle, no. Extraterrestrial resources are a better idea, but there isn't time to develop the space industry to tap them. The economic effects (including famines) of the developing energy crisis are so bad that large-scale production of SBSP needs to come on line by 2015 to avoid economic collapse. With this much activity in space, expect ET resources to be exploited by 2025.

This works out to lifting 100-200 tons per hour. Will physics let us get under $100/kg? A moving-cable space elevator requires only about 15 kWh to lift a kg from the surface of the Earth to GEO. That is $1.50 at the high rate of 10 cents a kWh. Even if it cost $100 billion to build, a capital cost of $10 billion a year spread over a billion kg lifted is only ten dollars per kg. Unfortunately, we don't have the cable. Yet.
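The elevator energy figure can be checked from first principles. The sketch below assumes an ideal, lossless climber and standard values for Earth's gravitational parameter, equatorial radius, rotation rate and the GEO radius (none of which appear in the text). In Earth's rotating frame the climber's drive only has to supply the difference in gravitational plus centrifugal potential; the extra orbital speed at GEO is supplied by Earth's rotation through the cable. The result comes out a little under the 15 kWh quoted above, which leaves room for losses. The 10% annual capital charge is inferred from "$10 billion a year" on $100 billion.

```python
# Ideal (lossless) climber energy from the surface to GEO along a space
# elevator, computed in Earth's rotating frame: gravitational potential plus
# centrifugal potential. Standard constants; all losses ignored.
GM = 3.986004418e14      # Earth's gravitational parameter, m^3/s^2
R_EARTH = 6.378137e6     # equatorial radius, m
R_GEO = 4.2164e7         # geostationary orbit radius, m
OMEGA = 7.2921159e-5     # Earth's sidereal rotation rate, rad/s
J_PER_KWH = 3.6e6


def effective_potential(r: float) -> float:
    """Gravitational plus centrifugal potential per kg at radius r (J/kg)."""
    return -GM / r - 0.5 * OMEGA**2 * r**2


lift_kwh = (effective_potential(R_GEO) - effective_potential(R_EARTH)) / J_PER_KWH
energy_cost = lift_kwh * 0.10           # $/kg at 10 cents per kWh
capital_cost = 0.10 * 100e9 / 1e9       # 10%/yr on $100B, over 1e9 kg/yr

print(f"ideal lift energy: {lift_kwh:.1f} kWh/kg")
print(f"energy cost: ${energy_cost:.2f}/kg, capital: ${capital_cost:.0f}/kg")
```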

There are other options, but the ones examined further here are rockets, lasers, or some combination of the two.

Rockets vs lasers

Rockets are good for high thrust, but the rocket equation[W] is a hard taskmaster when you need delta V that is a multiple of the exhaust velocity.
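To see why a delta V that is a multiple of the exhaust velocity is so punishing, here is a minimal sketch of the Tsiolkovsky relation, mass ratio = exp(delta V / exhaust velocity), evaluated for a few multiples:

```python
import math


def mass_ratio(delta_v: float, exhaust_velocity: float) -> float:
    """Tsiolkovsky: initial mass / final mass needed for a given delta V."""
    return math.exp(delta_v / exhaust_velocity)


# The mass ratio grows exponentially with delta V / exhaust velocity.
for multiple in (0.5, 1, 2, 3):
    print(f"delta V = {multiple} x v_e -> mass ratio {mass_ratio(multiple, 1.0):.1f}")
```

Each additional multiple of the exhaust velocity multiplies the required mass ratio by e (about 2.7).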

Laser ablation propulsion[W] is not as well developed as rockets. Laser thrusters can reach very high exhaust velocities, leading to small mass ratios, but they are not good at high thrust. Jordin Kare[W] and others have spent decades designing lasers to launch from the surface.

To lift this much cargo, rockets would have to launch every hour, each with a liftoff mass of 6,000 tons to get 350 tons to LEO and 100 tons of that to GEO. The projected cost to GEO is around $500/kg. http://www.ilr.tu-berlin.de/koelle/Neptun/NEP2015.pdf

The energy payback (for making the fuel) is about 40 days.[1] The cost of rockets is not in the fuel but in the high cost of aerospace hardware and in the rocket equation, which dictates that even with the most energetic fuels only about one part in sixty of the liftoff mass is payload delivered to GEO.

The delta V needed from LEO to GEO is about 4.1 km/s. Using 10% of the LEO payload (35 tons) as reaction mass, with a laser that provides a 40 km/s exhaust velocity, produces about 4.2 km/s of delta V (the Tsiolkovsky rocket equation again).
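The 4.2 km/s figure can be reproduced directly from the rocket equation. A sketch, using the 350 t starting mass and 35 t of reaction mass from the paragraph above:

```python
import math


def delta_v(exhaust_velocity: float, initial_mass: float, final_mass: float) -> float:
    """Tsiolkovsky rocket equation; result has the units of exhaust_velocity."""
    return exhaust_velocity * math.log(initial_mass / final_mass)


# 350 t in LEO, 35 t (10%) expelled as reaction mass at 40 km/s.
dv = delta_v(40.0, 350.0, 315.0)
print(f"{dv:.2f} km/s")  # compare with the ~4.1 km/s needed from LEO to GEO
```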

The power required for an acceleration of 1/20 g (0.5 m/s²) is P = ½ × m × a × v_e = ½ × 350,000 kg × 0.5 m/s² × 40,000 m/s = 3.5 GW.

Changing velocity by 4,100 m/s at 0.5 m/s² takes 4,100 / 0.5 = 8,200 s, or about 2.3 hours. That is, 3.5 GW of laser power would raise eight loads a day from LEO to GEO. At 315 t each, that is about 2,500 t/day, somewhat exceeding the 2,200 t/day needed to install 400 GW per year.
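The power and timing figures can be recomputed in a few lines. This sketch sizes the power on the full 350 t starting mass; since thrust stays constant at fixed power and exhaust velocity, the acceleration actually rises as reaction mass is expelled, so the burn time is a slightly conservative estimate.

```python
ACCEL = 0.5            # m/s^2, about 1/20 g
EXHAUST_V = 40_000     # m/s
START_MASS = 350_000   # kg in LEO
DELTA_V = 4_100        # m/s, LEO to GEO
LOADS_PER_DAY = 8
DELIVERED_T = 315      # t per load after expelling 35 t of reaction mass

# Jet power = 1/2 * thrust * exhaust velocity, with thrust = mass * acceleration.
power_w = 0.5 * START_MASS * ACCEL * EXHAUST_V
burn_s = DELTA_V / ACCEL
delivered_per_day = LOADS_PER_DAY * DELIVERED_T
needed_per_day = 800_000 / 365   # t/day for 400 GW/yr at 2 kg/kW

print(f"power {power_w / 1e9:.1f} GW")
print(f"burn {burn_s:.0f} s = {burn_s / 3600:.1f} h")
print(f"{delivered_per_day} t/day delivered vs {needed_per_day:.0f} t/day needed")
```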

It also cuts the rocket launches from 24 a day to a more manageable, but still excessive, 7 or 8.

A 3.5 GW space-based laser built at GEO with a mass of 10,000 tons would cost $5 billion to lift by rocket and $35 billion for the laser itself. It can be expected to more than triple the yearly throughput to GEO, saving 2/3 of 0.8 billion kg × $500/kg, or $267 billion a year, in transport costs.

Amortized at 10 percent a year, the additional lift from LEO to GEO would cost $4 billion / 0.8 billion kg, or $5 per kg, plus the lift to LEO.
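A short sketch of the laser economics, using only the figures given in the two paragraphs above (10,000 t laser, $500/kg lift, $35 billion of hardware, 0.8 billion kg a year, 10% annual write-off):

```python
LASER_MASS_KG = 10_000 * 1_000   # 10,000 t laser
ROCKET_COST_PER_KG = 500         # $/kg to GEO by rocket
LASER_HARDWARE = 35e9            # $
ANNUAL_KG = 0.8e9                # kg/yr to GEO
WRITE_OFF = 0.10                 # fraction of capital charged per year

lift_cost = LASER_MASS_KG * ROCKET_COST_PER_KG      # $5 billion
capital = lift_cost + LASER_HARDWARE                # $40 billion
savings = (2 / 3) * ANNUAL_KG * ROCKET_COST_PER_KG  # yearly transport savings
laser_cost_per_kg = WRITE_OFF * capital / ANNUAL_KG # $/kg for LEO -> GEO

print(f"lift ${lift_cost / 1e9:.0f}B, total ${capital / 1e9:.0f}B")
print(f"savings ${savings / 1e9:.0f}B/yr, laser leg ${laser_cost_per_kg:.2f}/kg")
```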

Cost reduction

There is another way that cuts the liftoff mass by a factor of five compared to rockets.

The delta V from the surface to GEO is 7.8 km/s (to LEO), plus 2.5 km/s (LEO to GTO), plus 1.6 km/s to circularize at GEO, totaling 11.9 km/s (see Delta-v_budget). Reaching LEO takes 796 s (13.3 minutes) at 1 g. Reaching GTO takes 17.5 minutes at 1 g, or 14 minutes at 1.25 g, plus 2.2 minutes at 1.25 g to circularize the orbit (1.6 km/s) at GEO. Assuming half payload and half reaction mass (a mass ratio of 2, so delta V / exhaust velocity = ln 2 ≈ 0.69) and 11.9 km/s of delta V, the average exhaust velocity needs to be a modest (for lasers) 17.2 km/s.
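The delta V budget, burn times and required exhaust velocity can be checked as follows. The sketch takes g as 9.8 m/s², the value the figures in the text imply, and treats each leg as a constant-acceleration burn:

```python
import math

G = 9.8  # m/s^2; the value implied by the text's 796 s and 12.25 m/s^2

# Delta V legs from the text, m/s.
DV_LEO = 7_800
DV_LEO_TO_GTO = 2_500
DV_CIRCULARIZE = 1_600
total_dv = DV_LEO + DV_LEO_TO_GTO + DV_CIRCULARIZE  # 11,900 m/s


def burn_minutes(delta_v: float, g_load: float) -> float:
    """Minutes to gain delta_v at a constant acceleration of g_load * G."""
    return delta_v / (g_load * G) / 60


print(f"to LEO at 1 g: {burn_minutes(DV_LEO, 1.0):.1f} min")
print(f"to GTO at 1 g: {burn_minutes(DV_LEO + DV_LEO_TO_GTO, 1.0):.1f} min")
print(f"to GTO at 1.25 g: {burn_minutes(DV_LEO + DV_LEO_TO_GTO, 1.25):.1f} min")
print(f"circularize at 1.25 g: {burn_minutes(DV_CIRCULARIZE, 1.25):.1f} min")

# Half payload, half reaction mass -> mass ratio 2, so v_e = total_dv / ln 2.
v_e = total_dv / math.log(2)
print(f"required average exhaust velocity: {v_e / 1000:.1f} km/s")
```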

Because the laser can be cycled close to four times an hour, the payload only needs to be 25 tons. Taking the midpoint mass of 37,500 kg (the exhaust velocity would be varied to keep thrust constant), the power required for 1.25 g (12.25 m/s²) is ½ × 37,500 kg × 12.25 m/s² × 17,200 m/s ≈ 4 GW. In the context of building a GW of power satellites a day, this is a small piece of hardware. Whether to build the laser in space or on the ground has not been fully examined.
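A sketch of the sizing above: the payload per cycle comes from the 100 t/h requirement split across four cycles, and the power is evaluated at the midpoint mass between the 50 t start and the 25 t end of the burn, as the text does.

```python
G = 9.8                    # m/s^2
THROUGHPUT_T_PER_H = 100   # cargo requirement, t/h
CYCLES_PER_H = 4           # laser cycles per hour
EXHAUST_V = 17_200         # m/s, average from the delta V budget
ACCEL = 1.25 * G           # ~12.25 m/s^2

payload_t = THROUGHPUT_T_PER_H / CYCLES_PER_H   # 25 t per cycle
start_t = 2 * payload_t                         # half payload, half reaction mass
mid_mass_kg = 1000 * (start_t + payload_t) / 2  # 37,500 kg

# Jet power = 1/2 * thrust * exhaust velocity, thrust = mass * acceleration.
power_w = 0.5 * mid_mass_kg * ACCEL * EXHAUST_V
print(f"payload {payload_t:.0f} t, midpoint mass {mid_mass_kg:,.0f} kg, "
      f"power {power_w / 1e9:.1f} GW")
```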

The 50-ton payload-plus-reaction-mass has to hang in space long enough to be accelerated. This takes a rocket with only a modest mass ratio.

| Component | Mass | Share of liftoff mass |
|---|---|---|
| Payload plus reaction mass for the laser | 50 t | 16.7% |
| Rocket structure | 50 t | 16.7% |
| Propellant | 200 t | 66.7% |
| Total at liftoff | 300 t | 100% |

The rocket would lift off on two SSMEs (or similar); if a zeroth stage of turbofans were used, it would take eight engines of 50 tons thrust each, perhaps with afterburners. To simplify operations, it goes straight up and lands back at the launch site. If the payload is oriented at right angles to the vertical, no time is wasted reorienting it for laser acceleration.

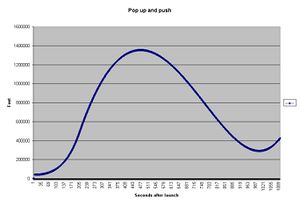

This "little" rocket (about the mass of a 747) has a mass ratio of two and a delta V of 4 km/s (less gravity loss and drag). The payload-plus-reaction-mass exits the atmosphere at 2.1 km/s, rises to 260 miles, falls to 150 miles before picking up orbital velocity, and then falls to 55 miles (picking up a little air drag) before losing its downward velocity. It enters GTO 1,000 seconds after launch. This estimate is conservative, since it does not account for the curvature of the Earth.

It masses 1/20th of a Neptune and (with the aid of the laser) delivers 1/4 as much payload per launch. One part in 12 (rather than one part in 60) of the liftoff mass is payload. Keeping the laser busy and meeting the 100-ton-per-hour cargo requirement takes a launch every 15 minutes (or every 7.5 minutes for 200 tons per hour). This rate is common for airlines, but not for spacecraft.
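The mass fractions in the table and the launch cadence can be recomputed together. A minimal sketch using the table's masses and the Neptune figures (6,000 t liftoff, 100 t to GEO) quoted earlier:

```python
# Liftoff mass breakdown from the table (tonnes).
BREAKDOWN_T = {
    "payload plus reaction mass": 50,
    "rocket structure": 50,
    "propellant": 200,
}
LIFTOFF_T = sum(BREAKDOWN_T.values())   # 300 t
PAYLOAD_T = 25                          # delivered to GEO per launch
NEPTUNE_LIFTOFF_T, NEPTUNE_GEO_T = 6_000, 100

for name, tonnes in BREAKDOWN_T.items():
    print(f"{name}: {100 * tonnes / LIFTOFF_T:.1f}%")

print(f"payload fraction: 1/{LIFTOFF_T / PAYLOAD_T:.0f} "
      f"(Neptune: 1/{NEPTUNE_LIFTOFF_T / NEPTUNE_GEO_T:.0f})")
print(f"size vs Neptune: 1/{NEPTUNE_LIFTOFF_T / LIFTOFF_T:.0f}")


def launch_interval_min(tonnes_per_hour: float) -> float:
    """Minutes between launches needed to sustain a given cargo rate."""
    return 60 * PAYLOAD_T / tonnes_per_hour


for rate in (100, 200):
    print(f"{rate} t/h -> a launch every {launch_interval_min(rate):.1f} min")
```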

If the laser cost $40 billion and were written off at 10% per year, the lift cost from suborbital to GEO would be $4 billion / 0.8 billion kg, or $5/kg, plus perhaps $60/kg of payload for the suborbital flight. This meets the penny-a-kWh and dollar-a-gallon fuel goals.

Energy payback is 8 days for the rocket fuel and 8 days for the laser. This is about 8.4% of the theoretical minimum for a space elevator.

This is probably not the optimum design. A shorter "hang time," smaller payloads and higher but shorter acceleration may yield lower cost per kg. (It is possible that laser launch from the ground is the end point of this optimization.) However, the size of the largest part for a power satellite may limit the minimum size of rocket.

Other uses for a laser

There is an awful lot of space junk: 15,000 pieces at least. Power satellites built in LEO and transported to GEO on high-impulse engines have been proposed. Even back in the late 1970s, when there was far less space junk, it was realized that something the size of a power sat would arrive at GEO full of holes. A propulsion laser can be used to deorbit space junk.